8,500+ GitHub Stars across 7 Dev Tools: Varnan's Predictable Distribution Engine

Wed, Mar 4, 2026 · 20 min read

TL;DR

- Challenge: Seven open-source projects across hardware hacking, AI infrastructure, security, and developer tools. All with solid tech but limited reach.

- Solution: Strategic video content built for developer audiences, distributed across Instagram and YouTube

- Results:

  - LibrePods: 5,500+ stars in 29 days from 600K views + major tech press coverage

  - Macless-Haystack: 450+ stars in 3 weeks from 500K views

  - CraftGPT: 180+ stars from 343K views

  - PicoClaw: ~1,500 stars from 1M+ views, 8K+ comments

  - Shannon: ~500 stars from 380K views, 2.8K comments

  - AirLLM: ~250 stars from 226K views, 1.7K+ comments

  - Pixel Agents: 176 stars in 1 day from 99K views

- Total Impact: 8,500+ GitHub stars generated, 3.7M+ combined views, multiple tech publications covering projects

- Key Finding: About 25-35% of people who comment on our videos end up starring the repo (after accounting for our own replies, which make up 40-50% of total comments). That number has held across all 7 projects.

The Problem Every Open-Source Project Faces

You've shipped something genuinely innovative. Your code is production-ready. Your architecture is elegant. Your documentation is comprehensive.

Nobody knows you exist.

This isn't a hypothetical scenario. This is the reality for 99% of open-source projects and developer tools. The gap between "technical excellence" and "market visibility" isn't just wide; it swallows most projects before they reach their full potential.

Traditional developer marketing doesn't work:

- Paid ads burn cash without qualified conversions

- Cold outreach gets ignored or filtered

- Generic "content marketing" drowns in noise

- Product Hunt launches spike for 48 hours, then die

The real question: If you build something developers need, how do you ensure developers actually discover it?

Between October 2024 and March 2026, we ran this exact playbook across seven open-source projects. Each had genuine technical merit. Each had minimal visibility. Each needed a distribution strategy that actually worked.

We engineered content specifically designed to reach qualified technical audiences. Then we measured everything.

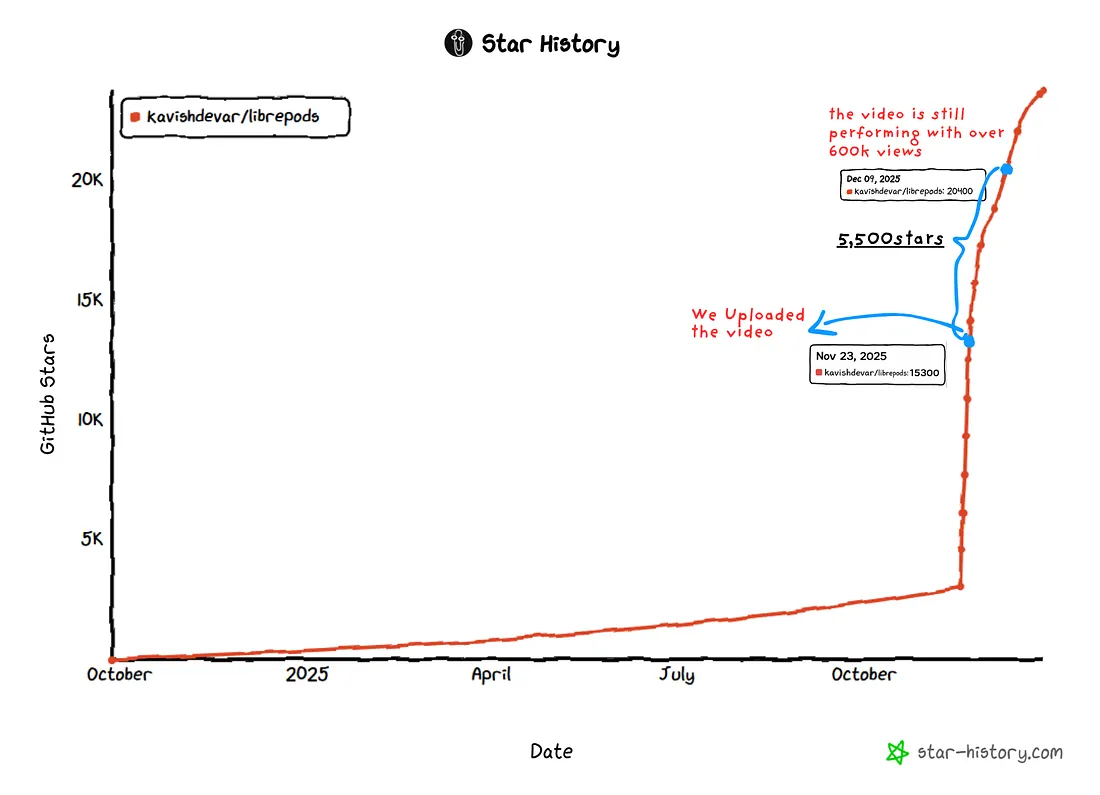

Case Study 1: LibrePods

The Product

LibrePods is an open-source Android and Linux application that reverse-engineers Apple's proprietary AirPods protocols, unlocking iPhone-exclusive features on non-Apple devices.

The project reverse-engineers the Bluetooth protocols that Apple keeps locked to its ecosystem. It implements noise control modes, transparency mode, ear detection, and hearing aid features. It was created by a 15-year-old developer from Gurugram, India. It requires a rooted Android device with the Xposed framework, a real technical barrier that means the project needs qualified users who understand the value proposition.

Target Audience: Android power users, Linux enthusiasts, hardware hackers, developers interested in Bluetooth protocol reverse-engineering, and AirPods owners frustrated with Apple's ecosystem lock-in.

The Problem

Despite having a functional product and legitimate technical innovation, LibrePods faced critical visibility challenges:

- Discovery Gap: Users searching "AirPods Android" found commercial apps or basic Bluetooth tools, not a sophisticated reverse-engineering project

- Legitimacy Barrier: Open-source hardware hacking projects often face skepticism along the lines of "Does this actually work?"

- Adoption Friction: The technical requirements of root access and Xposed framework meant the project needed qualified users who understood the value proposition

Without visibility, even groundbreaking reverse-engineering work remains niche. The project needed mass-market awareness while maintaining technical credibility.

Our Approach

We positioned LibrePods as a human story, showing how a "15-year-old beats Apple and gets AirPods features they won't give you."

The content strategy was a multi-platform video built around screen recordings: actual noise control mode switching, a live demonstration of ear detection and transparency mode, battery status popups matching Apple's UI, and an honest discussion of the technical requirements, including root and Xposed.

We posted it to Instagram and YouTube targeting Android power users, hardware hackers, and developers interested in Bluetooth protocol reverse-engineering.

The Results

- December 22, 2024: 5,500+ new stars added in 29 days

- Video Performance: 600,000+ combined views across Instagram and YouTube

- Engagement: 1,500+ comments across platforms

Media Amplification: We didn't pitch journalists. The video demonstrated such clear technical merit that tech reporters found it themselves and wrote unprompted coverage, triggering a ton of secondary sharing across blogs, forums, and developer communities. Here are a few:

- 9to5Mac: "AirPods Pro user found way to unlock iPhone-exclusive features on Android"

- Android Authority: "This free app finally lets your AirPods Pro play nice with Android phones"

- Pocket-lint: "This app makes AirPods work great on Android"

- Digit India: "Gurugram school student builds open-source app... to bring full AirPods features to Android users worldwide"

Contributors started submitting PRs for new features and protocol improvements. Users created installation guides in multiple languages. Media coverage attracted corporate sponsorship interest. Tech press validation converted skeptical users into active community members, and search ranking improved for "AirPods Android" queries.

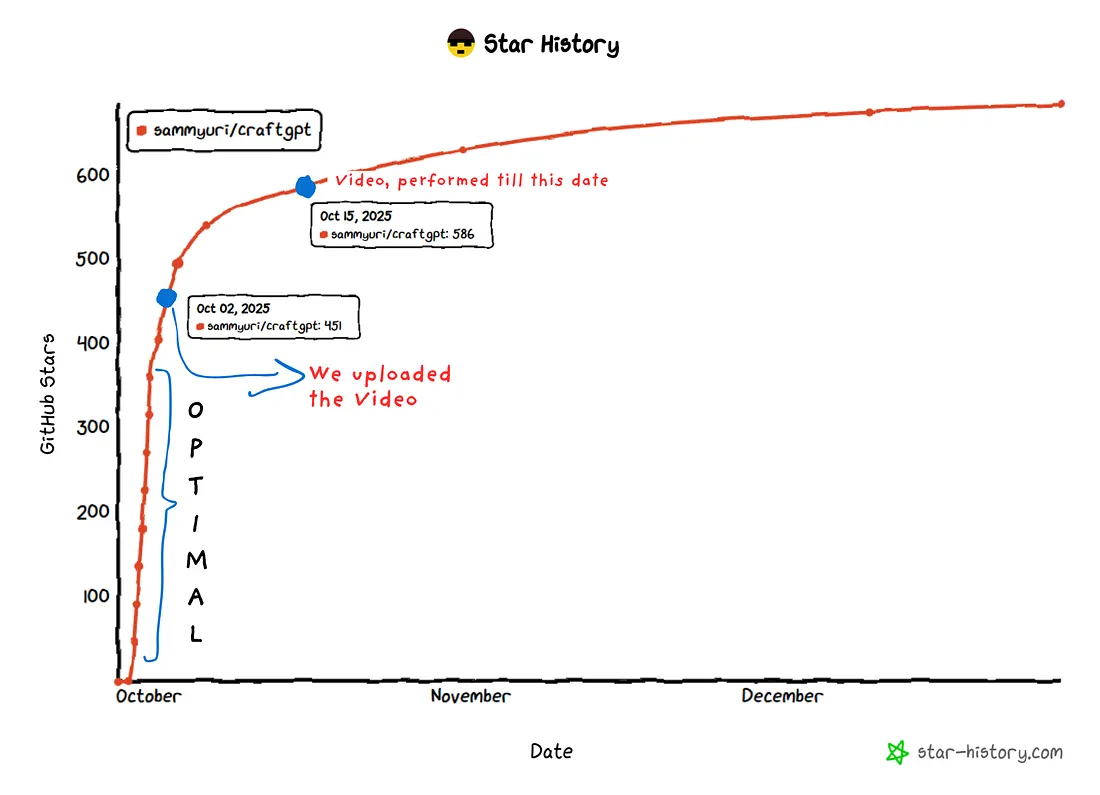

Case Study 2: CraftGPT

The Product

CraftGPT is a small language model built entirely in Minecraft using vanilla redstone mechanics. No mods and no plugins, just pure computational engineering inside a game environment.

The project implements an actual neural network architecture using Minecraft's redstone logic gates. It's not a toy. It's a legitimate demonstration of computational theory applied in an unexpected medium.
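To make the "logic gates" idea concrete, here is a minimal Python sketch of how a few Boolean gates compose into the integer arithmetic a neural network layer needs. It is an illustration only and is not taken from CraftGPT's actual circuits; the function names, the 8-bit width, and the repeated-addition multiply are all assumptions chosen for brevity.

```python
from typing import List, Tuple

# Illustrative only: Boolean gates composed into an adder, the kind of
# primitive a gate-level build has to provide before it can do matrix math.

def xor_gate(a: int, b: int) -> int:
    return a ^ b

def and_gate(a: int, b: int) -> int:
    return a & b

def or_gate(a: int, b: int) -> int:
    return a | b

def full_adder(a: int, b: int, carry_in: int) -> Tuple[int, int]:
    """Add three bits; return (sum_bit, carry_out)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return sum_bit, carry_out

def ripple_add(x: int, y: int, width: int = 8) -> int:
    """Add two unsigned integers bit by bit, wrapping at `width` bits."""
    carry = 0
    result = 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result

def dot_product(weights: List[int], inputs: List[int], width: int = 8) -> int:
    """Multiply-accumulate using only the gate-level adder."""
    total = 0
    for w, x in zip(weights, inputs):
        for _ in range(x):  # w * x as x repeated additions of w
            total = ripple_add(total, w, width)
    return total

print(ripple_add(5, 3))             # 8
print(dot_product([2, 3], [4, 5]))  # 2*4 + 3*5 = 23
```

A dot product like this is the core operation inside a network layer, which is why a working adder is the natural first building block in any gate-level design.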

Target Audience: Computer science enthusiasts, ML engineers interested in foundational concepts, educators teaching neural network architecture, and the Minecraft technical community.

The Problem

CraftGPT existed in complete obscurity. Despite being featured on specialized forums, it had only 451 GitHub stars as of October 2, 2024, with minimal community engagement, no mainstream visibility, and no clear distribution strategy.

The project appealed to a narrow intersection of audiences, specifically ML practitioners who are also Minecraft enthusiasts, making traditional discovery mechanisms ineffective.

Our Approach

We didn't position CraftGPT in fancy terms as "computational theory made tangible." We positioned it simply as "AI in Minecraft."

The content strategy was a single Instagram video plus YouTube reposting, demonstrating the actual redstone architecture implementing neural network layers, real-time token generation in vanilla Minecraft, and the educational value of understanding LLMs by building one within constraints.

We posted it to Instagram and reposted to YouTube targeting developers interested in the intersection of ML fundamentals, gaming, and technical education.

The Results

- October 31, 2024: 631 stars, which is 180 new stars in 29 days for 40% growth

- Current Status: 684 stars, which is 233 total stars generated for 51.7% growth

- Video Performance: 343,000+ Instagram views

- Engagement: Around 500 comments from qualified developers
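The growth figures above are simple ratios against the pre-video baseline. A minimal sketch of that arithmetic, using the star counts quoted in this case study (the helper name `growth` is ours, not part of any tool):

```python
from typing import Tuple

def growth(before: int, after: int) -> Tuple[int, float]:
    """Return (stars_added, percent_growth) relative to the starting count."""
    added = after - before
    return added, 100.0 * added / before

BASELINE = 451  # stars on October 2, 2024

for label, stars in [("October 31, 2024", 631), ("current", 684)]:
    added, pct = growth(BASELINE, stars)
    print(f"{label}: +{added} stars, {pct:.1f}% growth")
```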

Developers explicitly cited the video as their discovery mechanism. Project contributions and issue discussions increased. The content got shared across ML education communities, and educational institutions reached out for classroom demonstrations.

Case Study 3: Macless-Haystack

The Product

Macless-Haystack is an open-source project enabling custom AirTag-like tracking devices using Apple's Find My network without needing a Mac.

It reverse-engineers Apple's Find My network protocols, supports different chipsets for DIY tracking devices, and provides an Android app and web frontend for tracking.

Target Audience: Hardware hackers, privacy-conscious users, IoT developers, makers building custom tracking devices, and developers curious about Apple's Find My network.

The Problem

Despite solving a genuine technical problem of accessing Find My without Apple hardware, Macless-Haystack faced critical barriers:

- Use Case Clarity: Potential users didn't immediately understand why they'd want this

- Technical Complexity: The setup process intimidated non-expert users

- Competitive Noise: OpenHaystack and similar projects created confusion

- Trust Barrier: The question "Does this actually work with Apple's network?" came up constantly

The intersection of "hardware hackers" plus "Apple ecosystem users" plus "DIY tracking needs" was too narrow for organic discovery.

Our Approach

We repositioned Macless-Haystack from "technical curiosity" to "practical privacy tool." The core narrative was simple: build your own AirTags for $3 instead of $29, track anything you want, no Apple device required, and have full control over your data.

The key value propositions were cost (chipset cost of $3 to $5 compared to $29 AirTags), privacy (your tracking data under your control), customization (track anything from bikes to drones to luggage to pets), and independence (no Mac or iPhone required to set up or manage).

We targeted hardware hacking forums like Reddit's r/esp32 and r/arduino, privacy-focused communities, maker spaces and DIY electronics groups, and drone and RC hobbyist communities.

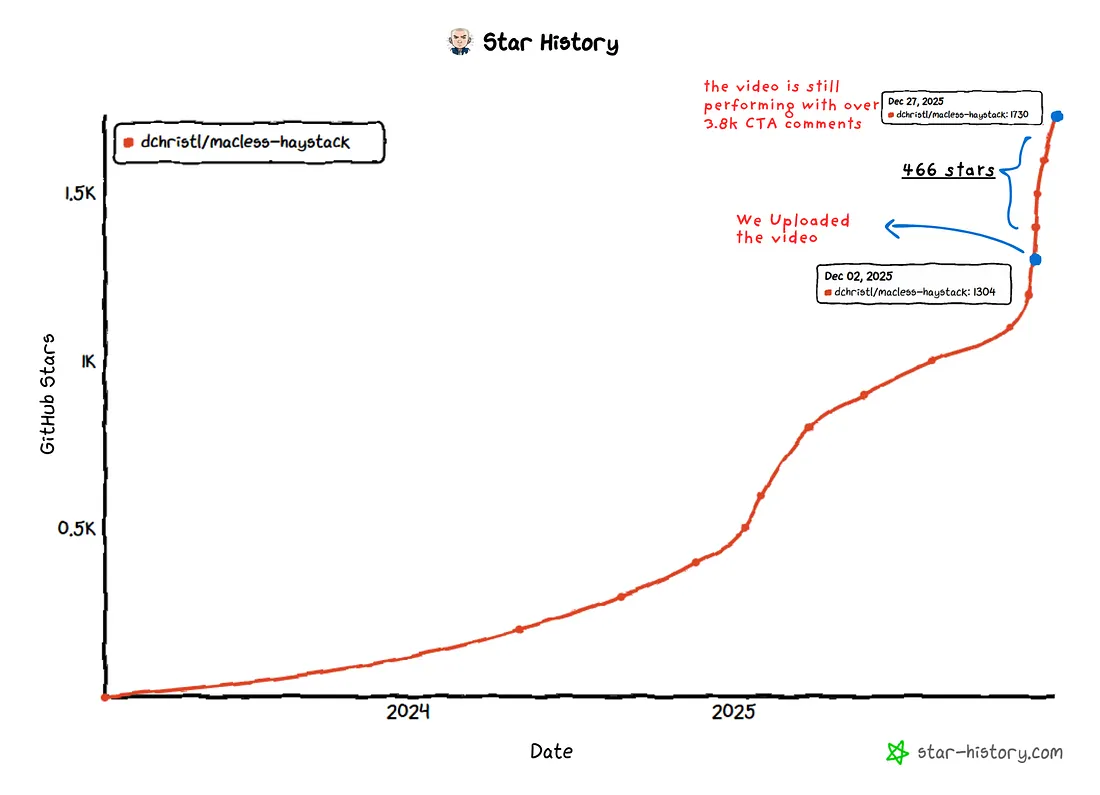

The Results

- Video Upload: December 2, 2024

- Current Status: 1,666 stars, which is 466 new stars in roughly 3 weeks for 38.8% growth

- Video Performance: 500,000+ combined views across Instagram and YouTube

- Engagement: 3,500+ comments asking technical questions

Hardware enthusiasts started sharing their custom builds. Issue reports increased, which led to bug fixes. Feature requests came in from real-world use cases, and documentation improved from community contributions. Educational institutions also started using it for teaching.

The project went from "obscure technical experiment" to "definitive open-source solution for DIY tracking" in three weeks.

Case Study 4: PicoClaw

The Product

If you've been following the open-source AI agent space, you've probably heard of OpenClaw (previously known as ClawdBot). It's a personal AI assistant that can clear your inbox, send emails, manage your calendar, and even check you in for flights, all from WhatsApp, Telegram, or any chat app. It's powerful stuff.

But OpenClaw is resource-hungry. We're talking a 1GB+ memory footprint and 500+ second startup times, so you basically need a Mac mini or a decent server to run it properly.

PicoClaw, built by Sipeed, is a Go rewrite inspired by nanobot (itself an ultra-lightweight Python alternative to OpenClaw). PicoClaw takes the core idea of an OpenClaw-style personal AI assistant and runs it on $10 hardware using less than 10MB of RAM.

How it compares to OpenClaw:

- Memory: 99% smaller, less than 10MB compared to 1GB+

- Startup: 400x faster, 1 second compared to 500+ seconds

- Cost: 98% cheaper, runs on $10 RISC-V hardware compared to needing a Mac mini

Who cares about this: Self-hosting enthusiasts, embedded systems hobbyists, developers who want an OpenClaw-style AI agent but don't have expensive hardware, and anyone with old devices collecting dust.

The Problem

PicoClaw launched on February 9, 2026. It got early attention from Sipeed's existing hardware community, but that's a small world. The real audience, which is developers who want lightweight AI agents, simply didn't know it existed.

The main issues:

- "AI agent" means something else to most people. They think of heavy cloud infrastructure, not something running on a $10 board

- Sipeed is known as a hardware company. Nobody is browsing their GitHub expecting to find an AI agent project

- "AI on 10MB RAM" sounds too good to be true. Developers who work with LLMs know that models are huge, so this claim naturally invites skepticism

Our Approach

We didn't lead with specs or comparisons to OpenClaw. Instead, we led with the visual and showed PicoClaw actually running on tiny hardware, doing real things, in real time. The video demonstrated it running on actual Sipeed hardware with real-time interaction, and we also showed the old phone resurrection angle where you can turn a decade-old device into an AI assistant.

We posted it to Instagram and YouTube.

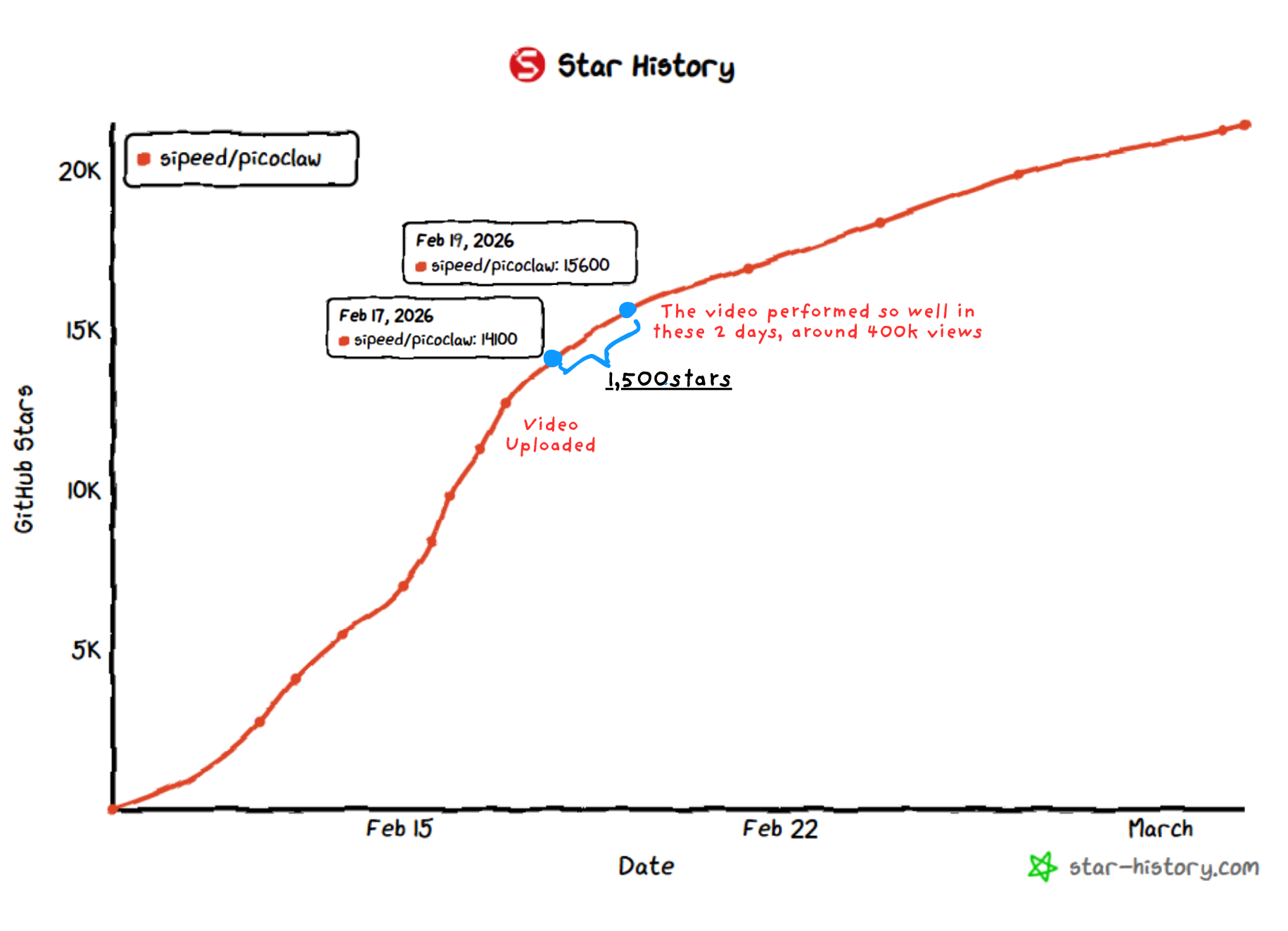

The Results

- Video Upload: February 17, 2026

- Stars Gained: ~1,500 (estimated at around 34% conversion from comments)

- Views: 1,000,000+ combined across Instagram and YouTube

- Comments: 8,000+ across platforms

- Repo Growth: From 14,100 stars on Feb 17 to 20,000+ by early March

The video is still performing and has crossed 1M combined views across Instagram and YouTube.

Case Study 5: Shannon

The Product

Shannon is a fully autonomous AI pentester built by KeygraphHQ. Unlike traditional security scanners that just throw alerts at you, Shannon actually hacks your web app to prove that vulnerabilities are real. It autonomously hunts for attack vectors in your source code, then uses its built-in browser to execute real exploits like injection attacks and auth bypass, and finally hands you a concrete proof-of-concept showing that the vulnerability is actually exploitable.

Shannon Lite has achieved a 96.15% success rate on the hint-free, source-aware XBOW benchmark. It runs fully containerized via Docker with Temporal-based workflow orchestration, so you can launch a pentest with a single command.

Shannon is a core component of the Keygraph Security and Compliance Platform. While Shannon handles the penetration testing side, the broader platform automates your entire compliance journey from evidence collection to audit readiness. The core pitch makes a lot of sense: thanks to tools like Claude Code and Cursor, development teams are shipping code every single day, sometimes every hour. But their penetration test still happens once a year. That creates a massive security gap, and Shannon is designed to close it by acting as your on-demand whitebox pentester.

To show what it can actually do, Shannon discovered 20+ critical vulnerabilities in OWASP Juice Shop, including complete auth bypass and database exfiltration.

Who cares about this: Security engineers, DevSecOps teams, CTOs at startups shipping fast with AI coding tools, penetration testers looking for automation, and compliance teams needing continuous security validation.

The Problem

- Security tools need extraordinary trust. When people hear "autonomous AI hacker," most think of a liability rather than a solution. Security is one of those spaces where you need to prove your tool works before anyone will let it near their codebase.

- The appsec market is incredibly noisy. There are tons of scanners out there that just generate false positives. Shannon's core difference, which is actual exploit execution rather than just alert generation, isn't something you can easily communicate through a GitHub README alone.

- Security developers hang out in different places. They're not scrolling the same feeds as general dev tool users, so reaching them requires different channels.

Our Approach

The video showed Shannon actually finding and exploiting real vulnerabilities. Not just alerts or warnings, but actual exploits with proof of compromise. We used the 96.15% XBOW benchmark number as the credibility anchor to show that this isn't just a toy.

We posted it to Instagram.

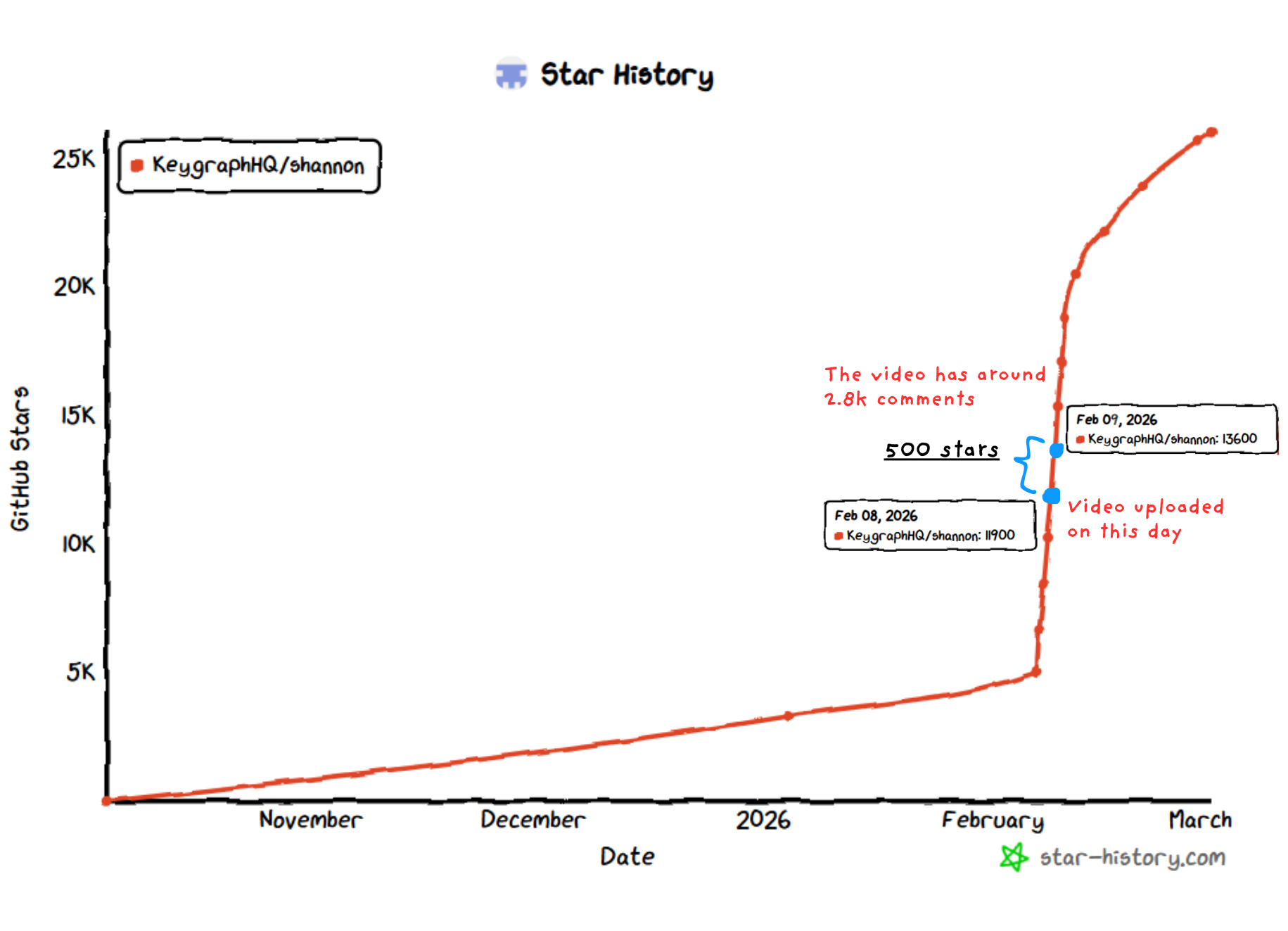

The Results

- Video Upload: February 8, 2026

- Stars Gained: ~500 (at roughly 32% conversion from comments)

- Views: 380,000+ on Instagram

- Comments: 2,800

- Repo Growth: From 11,900 stars on Feb 8 to 13,600+ by Feb 9, with continued growth after that

The video added around 500 stars to this project.

Case Study 6: AirLLM

The Product

AirLLM is a Python library that optimizes inference memory usage, allowing 70B large language models to run inference on a single 4GB GPU card. And here's the important part: it does this without any quantization, distillation, or pruning, so there's no degraded model performance. The way it works is by loading one transformer layer at a time into GPU memory during inference.
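The layer-at-a-time idea can be sketched in a few lines of plain Python. This is a toy illustration of the technique described above, not AirLLM's implementation: the JSON files stand in for checkpoint shards, the tiny matrices stand in for transformer layers, and every function name here is hypothetical.

```python
import json
import tempfile
from pathlib import Path
from typing import List

Matrix = List[List[float]]

def save_layers(layers: List[Matrix], directory: Path) -> List[Path]:
    """Write each layer's weights to its own file, like a sharded checkpoint."""
    paths = []
    for i, weights in enumerate(layers):
        path = directory / f"layer_{i}.json"
        path.write_text(json.dumps(weights))
        paths.append(path)
    return paths

def apply_layer(weights: Matrix, x: List[float]) -> List[float]:
    """Toy layer: matrix-vector product followed by ReLU."""
    return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in weights]

def streamed_forward(layer_paths: List[Path], x: List[float]) -> List[float]:
    """Run the whole stack while holding only one layer's weights at a time."""
    for path in layer_paths:
        weights = json.loads(path.read_text())  # load this layer only
        x = apply_layer(weights, x)
        del weights                             # release before the next load
    return x

with tempfile.TemporaryDirectory() as tmp:
    layers = [
        [[1.0, 0.0], [0.0, 1.0]],   # identity
        [[2.0, 0.0], [0.0, -1.0]],  # scale, then ReLU zeroes the negative
    ]
    paths = save_layers(layers, Path(tmp))
    print(streamed_forward(paths, [3.0, 4.0]))  # [6.0, 0.0]
```

As a rough back-of-envelope, assuming 16-bit weights and the 80 transformer layers of Llama 2 70B, the full model is about 70B × 2 bytes ≈ 140 GB, or roughly 1.75 GB per layer. That is why a single layer can fit on a 4GB card even though the whole model cannot. The trade-off is that every layer has to be read from disk on each forward pass.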

It doesn't stop at 70B either. You can run Llama 3.1 405B on 8GB VRAM. The library supports a wide range of models including Llama 3, ChatGLM, QWen, Baichuan, Mistral, and InternLM. It works on macOS, Linux, and even CPU-only setups. There's also a prefetching system that overlaps model loading and computation for about a 10% speed improvement, and you can optionally use 8-bit or 4-bit quantization if you want additional speed.
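Prefetching in this sense means starting to load the next layer while the current one is still computing. The sketch below simulates that overlap with a background thread; it is not AirLLM's code, the `time.sleep` calls are stand-ins for disk reads and GPU work, and all names are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

def load_layer(index: int) -> Callable[[float], float]:
    """Stand-in for reading one layer from disk (simulated with a sleep)."""
    time.sleep(0.05)
    return lambda x: x + index

def compute(layer: Callable[[float], float], x: float) -> float:
    """Stand-in for running one layer on the GPU."""
    time.sleep(0.05)
    return layer(x)

def forward_sequential(num_layers: int, x: float) -> float:
    """Load, then compute, one layer after another with no overlap."""
    for i in range(num_layers):
        x = compute(load_layer(i), x)
    return x

def forward_prefetched(num_layers: int, x: float) -> float:
    """Start loading layer i+1 in the background while layer i computes."""
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(load_layer, 0)
        for i in range(num_layers):
            layer = pending.result()  # wait for the current layer
            if i + 1 < num_layers:
                pending = pool.submit(load_layer, i + 1)  # prefetch the next
            x = compute(layer, x)
    return x

for fn in (forward_sequential, forward_prefetched):
    start = time.perf_counter()
    out = fn(8, 0.0)
    print(f"{fn.__name__}: output={out}, {time.perf_counter() - start:.2f}s")
```

Both versions produce the same output; only the wall-clock time differs. The toy uses equal load and compute times, so the overlap looks much larger here than the roughly 10% cited above. The real gain depends on how long loading takes relative to computation.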

The project was created by Gavin Li and has been around since 2023, with regular updates adding support for newer models and architectures over time.

Who cares about this: Anyone who wants to run big models locally but can't afford expensive GPUs. This includes AI hobbyists, students, researchers with consumer hardware, and the "GPU poor" crowd who want to experiment without cloud costs.

The Problem

AirLLM already had around 12,600 stars at the time, which is solid traction. But the growth had completely flatlined through 2025, and the project needed fresh eyes to break out of the plateau.

- Too many competitors in the "run LLMs locally" space. There's llama.cpp, Ollama, vLLM, and many others. AirLLM's unique approach of requiring no quantization was getting buried under all the noise.

- Organic discovery had dried up. The star growth curve had gone completely flat, which means the GitHub algorithm and social platforms had stopped surfacing it to new developers.

- Most developers assumed 70B models need expensive hardware. They simply didn't know that AirLLM existed as an option that could run these models on a 4GB GPU without compromises.

Our Approach

The hook was straightforward: "Run a 70B model on your 4GB Laptop GPU."

The video showed actual inference running on consumer hardware. We showed how the API is just a few lines of Python code, making it super accessible. We also highlighted the Llama 3.1 405B support to show the full range of what it can do.

We posted it to Instagram and YouTube.

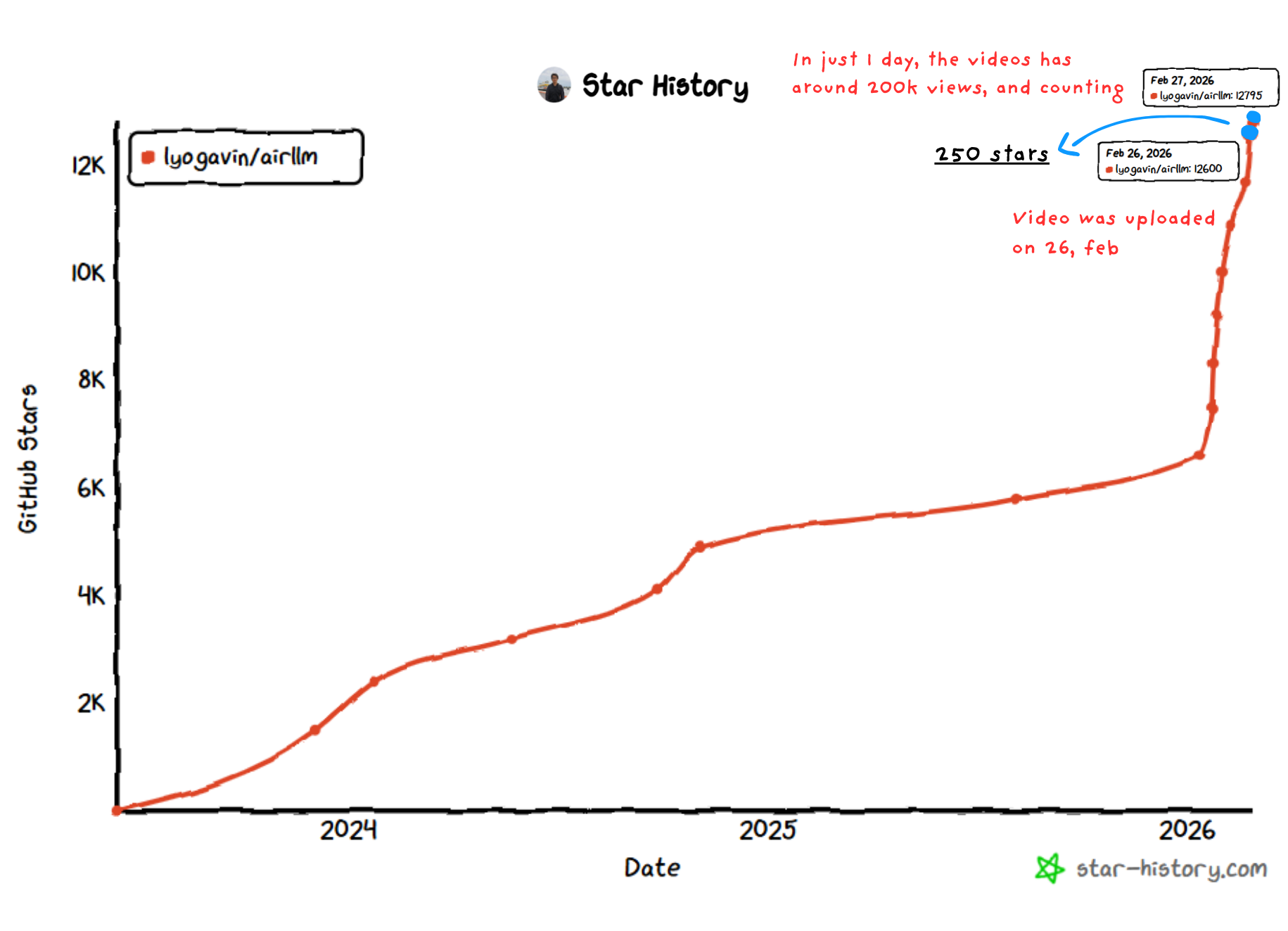

The Results

- Video Upload: February 26, 2026

- Stars Gained: ~250 (at around 27% conversion from comments)

- Views: 226,000+ combined across Instagram and YouTube

- Comments: 1,700+

- Repo Growth: From 12,600 on Feb 26 to 13,100+ by March

Note: the screenshot was taken on Feb 27 and the repo currently has around 13.1k stars. The video is still performing well on Instagram.

Case Study 7: Pixel Agents

The Product

Pixel Agents is a VS Code extension that turns your Claude Code agents into animated pixel art characters living in a virtual office. Every time you open a Claude Code terminal, it spawns a character that walks around the office, sits at desks, and visually reflects what the agent is actually doing in real time. When the agent is writing code, the character types. When it's searching files, the character reads. When it needs your attention or is waiting for permission, the character shows a speech bubble.

The way it works is by watching Claude Code's JSONL transcript files to track what each agent is doing. No modifications to Claude Code are needed since it's purely observational. Under the hood, the extension runs a lightweight game loop with canvas rendering, BFS pathfinding, and a character state machine that handles transitions between idle, walking, typing, and reading states. Everything is pixel-perfect at integer zoom levels.
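For readers unfamiliar with the two building blocks named above, here is a minimal Python sketch of BFS pathfinding on a tile grid plus a small character state machine. The extension itself is written in TypeScript and this is not its code: the grid encoding, the event names, and the transition table are all assumptions made for illustration.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]  # (row, col)

def bfs_path(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Shortest 4-directional path on a tile grid; '#' marks a blocked tile."""
    rows, cols = len(grid), len(grid[0])
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            path = []
            step: Optional[Cell] = current
            while step is not None:  # walk back to the start
                path.append(step)
                step = came_from[step]
            return path[::-1]
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and grid[nr][nc] != "#" and nxt not in came_from:
                came_from[nxt] = current
                queue.append(nxt)
    return None  # goal unreachable

# Hypothetical transition table over the four states named above.
TRANSITIONS = {
    ("idle", "task_assigned"): "walking",
    ("walking", "arrived_write"): "typing",
    ("walking", "arrived_read"): "reading",
    ("typing", "task_done"): "idle",
    ("reading", "task_done"): "idle",
}

def next_state(state: str, event: str) -> str:
    """Unknown (state, event) pairs leave the character where it is."""
    return TRANSITIONS.get((state, event), state)

office = [
    "....",
    ".##.",
    "....",
]
print(bfs_path(office, (0, 0), (2, 3)))
print(next_state("idle", "task_assigned"))  # walking
```

BFS is a natural fit for a small office grid because every step costs the same, so the first path that reaches the desk is also a shortest one.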

There's also a built-in office layout editor where you can design your own workspace. The grid is expandable up to 64x64 tiles, and you can place floors, walls, and furniture. The extension supports full persistence for agent states and office layouts across sessions. It's built with TypeScript, the VS Code Webview API, and esbuild.

Who cares about this: Claude Code users, VS Code enthusiasts, and anyone who appreciates creative developer experience touches.

The Problem

- "Is this useful?" is the first question everyone asks. Pixel art characters for your AI agents sounds fun, but most developers instinctively dismiss anything that isn't strictly productive.

- VS Code marketplace search favors utility extensions. Creative developer experience projects like this don't surface naturally through the marketplace's discovery mechanisms.

- It was a brand new project with zero community. No backlinks, no existing users, and no history of any kind to help spread the word.

Our Approach

We didn't try to justify productivity or make it sound more serious than it is. Instead, we leaned into the fun and showed the visual spectacle of pixel characters reacting to real coding activity. The video showed agents being spawned, characters walking to their desks, the layout editor in action, and that satisfying one-agent-one-character mapping that makes multi-agent workflows visible.

We posted it to Instagram only, and it hasn't been uploaded to YouTube yet.

The Results

- Video Upload: March 1, 2026

- Stars Gained: 176 (we have clear proof with before and after star-history screenshots)

- Views: 99,000+ on Instagram alone

- Comments: 880

- Repo Growth: From roughly 2,300 on March 1 to 2,479 by March 2, all in just one day

The repo gained 176 stars in just one day, which puts the conversion rate at about 36%. The video is still performing well and hasn't been uploaded to YouTube yet.

The Pattern: What Actually Drives Developer Adoption

After analyzing all seven case studies, we've identified the exact formula that converts views into GitHub stars, community activation, and sustained project growth.

1. Technical Credibility Through Infotainment

We don't create promotional content. We create technical analysis that developers actually want to watch.

- Demonstrate Real Value

- Explain the Technology

- Maintain Entertainment Value

- Be Honest About Limitations

The result is that developers share content that teaches them something valuable. Educational value drives organic reach.

2. Video Optimization for Developer Audiences

The algorithm favors high engagement, and comments are what drive the distribution boost. Short-form forces clarity because you have 60 to 90 seconds to demonstrate value. And the visual medium is perfect for product demonstrations.

3. The Multiplication Effect: Content to Coverage to Legitimacy

Here's what most founders miss. One piece of high-quality content doesn't just generate views. It triggers a cascade:

- Phase 1: Initial Video Release

- Phase 2: Community Sharing

- Phase 3: Media Pickup

- Phase 4: Search Optimization

- Phase 5: Sustained Growth

The 25-35% Number

Here's something important to understand about our comment numbers. We actively reply to a large portion of comments on every video, and roughly 40-50% of the total comment count is actually our own replies. So the raw comment number isn't the real audience size.

When you account for that, the actual conversion rate is that about 25-35% of the people who comment on our videos end up starring the repo. That has held across different project types including AI, security, hardware, and developer experience tools. It has also held across different video sizes ranging from 99K views to over 1M, across different platforms whether Instagram-only or Instagram plus YouTube, and across different repo maturity levels from brand new projects to repos with 12K+ stars.
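The adjustment described here can be written as a one-line formula. The sketch below assumes a 45% reply share, the midpoint of the 40-50% range; the function name and that default are our own choices for illustration.

```python
def commenter_star_rate(stars: int, raw_comments: int, reply_share: float = 0.45) -> float:
    """Stars as a percentage of audience comments (raw comments minus our replies)."""
    audience_comments = raw_comments * (1.0 - reply_share)
    return 100.0 * stars / audience_comments

# Pixel Agents: 176 stars, 880 raw comments
print(f"{commenter_star_rate(176, 880):.0f}%")   # 36%
# Shannon: ~500 stars, 2,800 raw comments
print(f"{commenter_star_rate(500, 2800):.0f}%")  # 32%
```

Note that this is a ratio of two counts. It estimates how star growth tracks audience comments, but it cannot confirm that the accounts that starred are the same people who commented.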

Comments Matter More Than Views

Views tell you how many people saw it. Comments tell you how many people actually cared. And stars follow the people who cared.

One Video Permanently Raises the Baseline

Every project shows the same pattern after our video goes up:

- Day 1 to 3: There's a big spike from direct video traffic

- Week 1 to 2: Continued growth from secondary sharing across platforms

- Month 1 to 3: The organic growth rate stays permanently higher than the pre-video levels

It's not a one-time bump. The video resets where the project sits in the discovery ecosystem.

The Numbers

A quick note on how to read this table: the "Comments" column is the raw number from the platform, which includes our replies. Our replies make up around 40-50% of that count, so the actual number of audience comments is lower. The "Star %" is calculated after accounting for that.

| Project | Views | Comments | Stars Gained | Star % |
|---|---|---|---|---|
| LibrePods | 600K | 1,500+ | 5,500+ | ~30% from comments + media amplified |
| Macless-Haystack | 500K | 3,500+ | 450+ | ~23% |
| CraftGPT | 343K | 500 | 180+ | ~65% |
| PicoClaw | 1M+ | 8,000+ | ~1,500 | ~34% |
| Shannon | 380K | 2,800 | ~500 | ~32% |
| AirLLM | 226K | 1,700+ | ~250 | ~27% |
| Pixel Agents | 99K | 880 | 176 | ~36% |
| Total | 3.7M+ | 18,880+ | ~8,556+ | 25-35% avg |

NOTE: LibrePods' star count far exceeds what comments alone would generate, since the video triggered unprompted media coverage from 9to5Mac, Android Authority, Pocket-lint, and others, which massively amplified the reach beyond the video audience. The ~30% estimate only accounts for the comment-driven portion. CraftGPT's unusually high percentage is likely due to the very niche and highly qualified audience that engaged with that content.
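Because the reply share is given as a range rather than a single number, each "Star %" is really a band. The sketch below recomputes that band for every row at a 40% and a 50% reply share. It uses the table's figures with "+" and "~" dropped, and leaves LibrePods out of the band calculation because its star count is media-amplified. The function name is hypothetical.

```python
from typing import Tuple

# (project, raw comments, stars gained) as listed in the table.
ROWS = [
    ("Macless-Haystack", 3500, 450),
    ("CraftGPT", 500, 180),
    ("PicoClaw", 8000, 1500),
    ("Shannon", 2800, 500),
    ("AirLLM", 1700, 250),
    ("Pixel Agents", 880, 176),
]

def star_rate_band(stars: int, raw_comments: int,
                   low_share: float = 0.40, high_share: float = 0.50) -> Tuple[float, float]:
    """Star % at the low and high ends of the stated reply-share range."""
    low = 100.0 * stars / (raw_comments * (1.0 - low_share))
    high = 100.0 * stars / (raw_comments * (1.0 - high_share))
    return low, high

for name, comments, stars in ROWS:
    low, high = star_rate_band(stars, comments)
    print(f"{name:<18} {low:5.1f}% to {high:5.1f}%")

# Totals, adding LibrePods back in (1,500 comments, 5,500 stars).
print("comments:", sum(c for _, c, _ in ROWS) + 1500)  # 18880
print("stars:   ", sum(s for _, _, s in ROWS) + 5500)  # 8556
```

The single percentages in the table sit near the middle of each band, which is consistent with a reply share of about 45%.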

Frequently Asked Questions

Do you only work with open-source projects?

No. Open-source projects are where the results are most visible because GitHub stars give us a clear, public metric to point at. But we work with all kinds of AI and developer tool companies, including closed-source SaaS products, developer platforms, and API-first businesses. The core strategy is the same: we create technical video content that reaches qualified developers and drives them to take action. For open-source projects that action is starring and contributing. For SaaS products it's signups, waitlist joins, or demo requests. The distribution engine works regardless of whether your code is on GitHub or behind a login page.

Our Guarantee: 90 Days or Free

We work exclusively with AI and developer tool companies. Our process:

Month 1: Positioning

- Deep market and competitor signal mapping

- Founder-led positioning and narrative workshop

- Clear messaging hierarchy and value propositions

- High-signal customer interviews to validate demand

Month 2: Launching and Testing (doing whatever's needed)

- Technical content built to rank and compound on Google

- Product-led assets (templates, tools, interactive demos)

- Founder thought leadership designed for LinkedIn distribution

- Structured launch sequences for major product releases

- Paid campaigns, if required, to ensure growth

Month 3: Launch & Measurement

- Execute targeted distribution

- Monitor conversion metrics

- Optimize based on performance data

- Deliver final results report

If we don't deliver measurable results (GitHub stars, qualified leads, contributor growth, or other agreed-upon metrics) within 90 days, we work for free until we do.

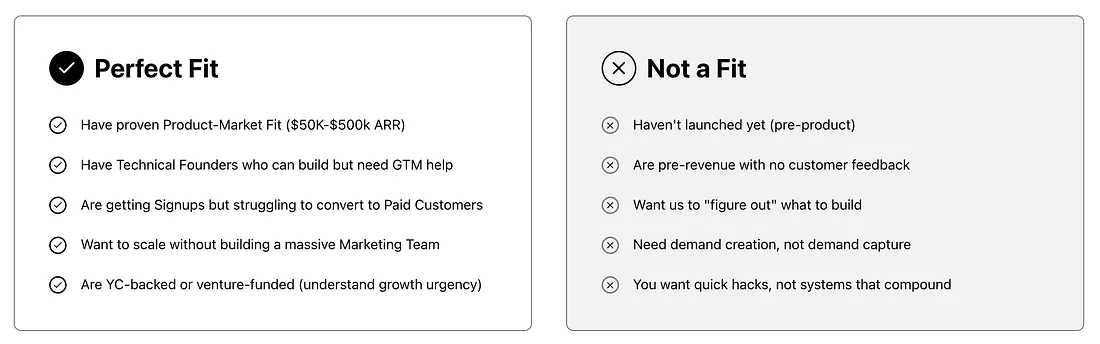

Are You a Good Fit?

We don't work with everyone. We work best with founders who have a product and traction and are ready to accelerate growth.

If you've built something worth discovering, we'll help the right people find it.

Book a strategy session

We'll analyze your product positioning, identify your target audience, and outline a 90-day plan to measurable results. Start your journey by booking a free call.